In this report:

- Introduction

- The acceleration of AI: Adoption, priority and momentum

- The new economics of AI: Spend, waste and the discipline gap

- Governance, risk and the visibility mandate

- Realizing AI value: Scaling responsibly, measuring impact and turning clarity into ROI

- Vertical snapshots: How industries turn AI into real ROI

- AI value isn’t inevitable. It’s engineered.

- Methodology

- FAQ

Artificial intelligence (AI) adoption is accelerating fast. Spend is climbing. Waste is real. Agentic AI and large language models (LLMs) have pushed adoption beyond what most teams were ready to govern. Visibility gaps, unmanaged usage, inconsistent procurement and opaque SaaS behavior are already creating financial, operational and compliance risks that will only grow without action.

The inaugural Flexera 2026 AI Pulse Report offers a data-driven look at how organizations are managing these challenges. As AI expands across cloud, SaaS and business operations, new obstacles like rising costs, unpredictable workloads and governance gaps have emerged. Drawing on Flexera’s global research, this report highlights how organizations are integrating AI and addressing these evolving risks.

Forecasts show the scale of what’s ahead. Gartner expects worldwide AI spending to reach $2.52 trillion in 2026—a 44% year‑over‑year (YoY) increase. Analysts see this as both confirmation of long‑term demand and a reminder that governance must grow with spend.

But none of that changes what leaders need to do now:

- Own clarity on your business goals

- Codify governance

- Orchestrate ROI

Shadow AI, data governance concerns and inconsistent oversight are becoming top barriers even as myriad industries begin to identify where AI can truly transform outcomes. Flexera’s 2026 AI Pulse Report captures this pivotal moment—one defined not only by rapid innovation, but by the growing need to control, measure and operationalize AI for long‑term advantage.

Markets fluctuate, capabilities compound. Your job isn’t to time the hype; it’s to manage the risk

The acceleration of AI: Adoption, priority and momentum

Key takeaways

- AI has rapidly transitioned from experimentation to widespread adoption, significantly influencing IT strategies and becoming the top technology priority for organizations in 2026

- Adoption velocity is exceptionally high, with 99% of organizations using or experimenting with generative AI (GenAI) and a majority actively integrating AI and machine learning services into their technology stacks

- While AI spending is surging, a substantial portion is wasted—36% of organizations report overspending on AI applications, and 14% cite wasted AI spend, highlighting the need for structured governance and outcome measurement

AI is a top IT priority for 2026

AI has moved beyond experimentation and hype cycles and into a period of historic acceleration. Across Flexera’s global research portfolio, the evidence is consistent: AI isn’t coming. It’s here, it’s multiplying, and it’s shaping IT strategy more forcefully than any technology shift in a generation.

But AI won’t prove its value without structure. Leaders need to tie spend to outcomes using unit economics, set scale-up gates tied to KPIs, measure AI workloads the way FinOps measures cloud and avoid overbuying tools before value is evident.
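As an illustration of what tying spend to outcomes with unit economics can look like, the minimal Python sketch below divides the total cost of an AI-assisted process by the number of business outcomes it delivered and compares the result with the pre-AI cost per outcome. The class name, fields and figures are hypothetical, and the outcome unit (tickets resolved) is an assumption chosen only for the example; a real model would also need agreed rules for which costs count.

```python
from dataclasses import dataclass


@dataclass
class UnitEconomics:
    """Hypothetical unit-economics record for one AI initiative over one period."""

    process_cost: float            # total cost of the AI-assisted process (licenses, tokens, compute, remaining labor)
    outcome_units: int             # business outcomes delivered, e.g. tickets resolved (assumed unit)
    baseline_cost_per_unit: float  # cost per outcome before the AI initiative

    def cost_per_unit(self) -> float:
        if self.outcome_units <= 0:
            raise ValueError("no outcomes recorded; unit cost is undefined")
        return self.process_cost / self.outcome_units

    def savings_per_unit(self) -> float:
        # Positive: the AI-assisted process is cheaper per outcome than the baseline.
        return self.baseline_cost_per_unit - self.cost_per_unit()


# Illustrative figures only, not survey data.
pilot = UnitEconomics(process_cost=12_000.0, outcome_units=4_000, baseline_cost_per_unit=4.50)
print(f"cost per unit: {pilot.cost_per_unit():.2f}")        # cost per unit: 3.00
print(f"savings per unit: {pilot.savings_per_unit():.2f}")  # savings per unit: 1.50
```

The key design choice is that the denominator is a business outcome rather than a technical unit such as tokens or GPU hours, which keeps the metric meaningful to finance and business stakeholders.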

The lesson from the cloud still applies: Just as hybrid cloud needed visibility and governance to deliver business value, AI requires the same disciplined approach.

Value isn’t assumed—it’s measured

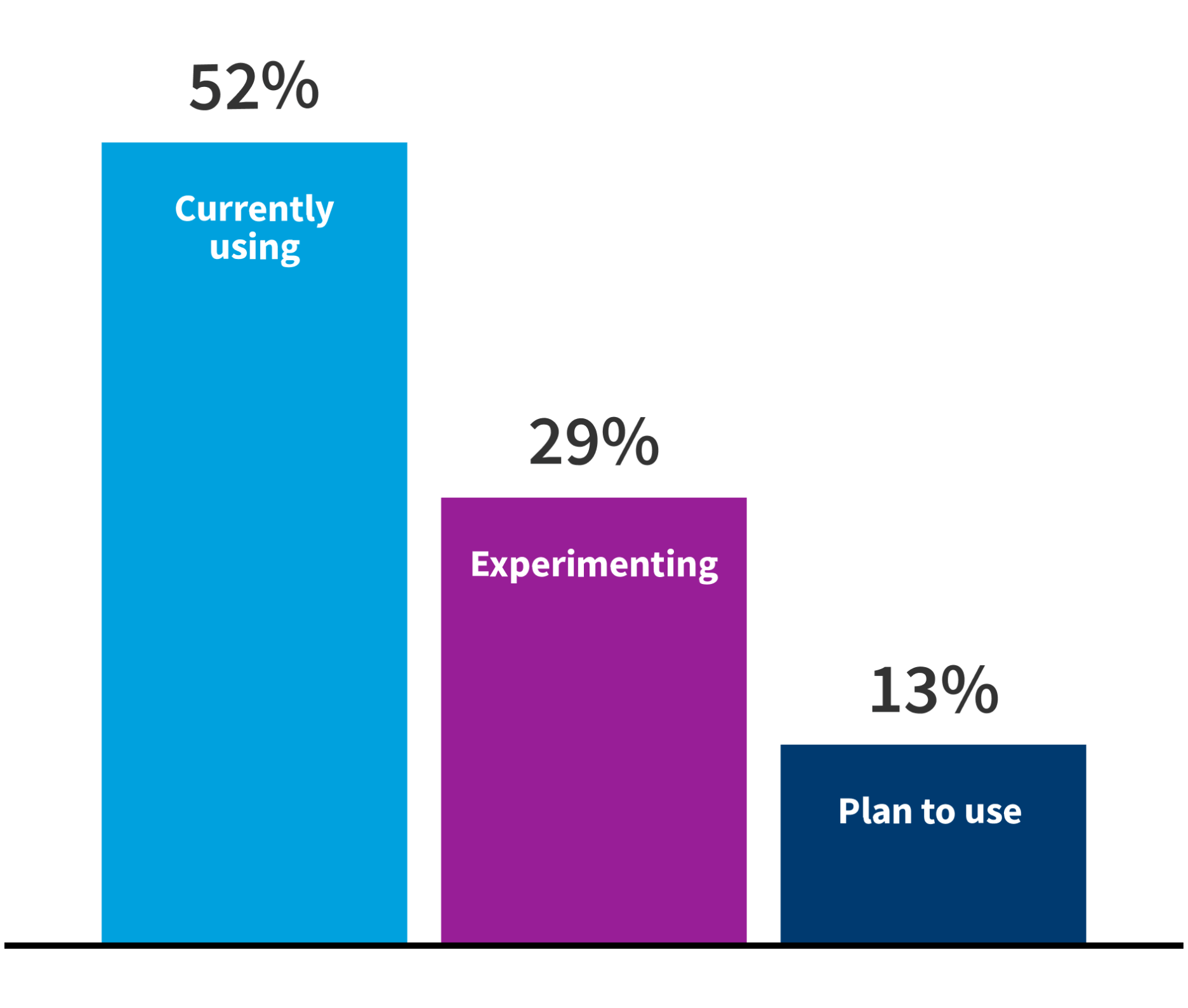

AI adoption velocity is blowing past previous predictions

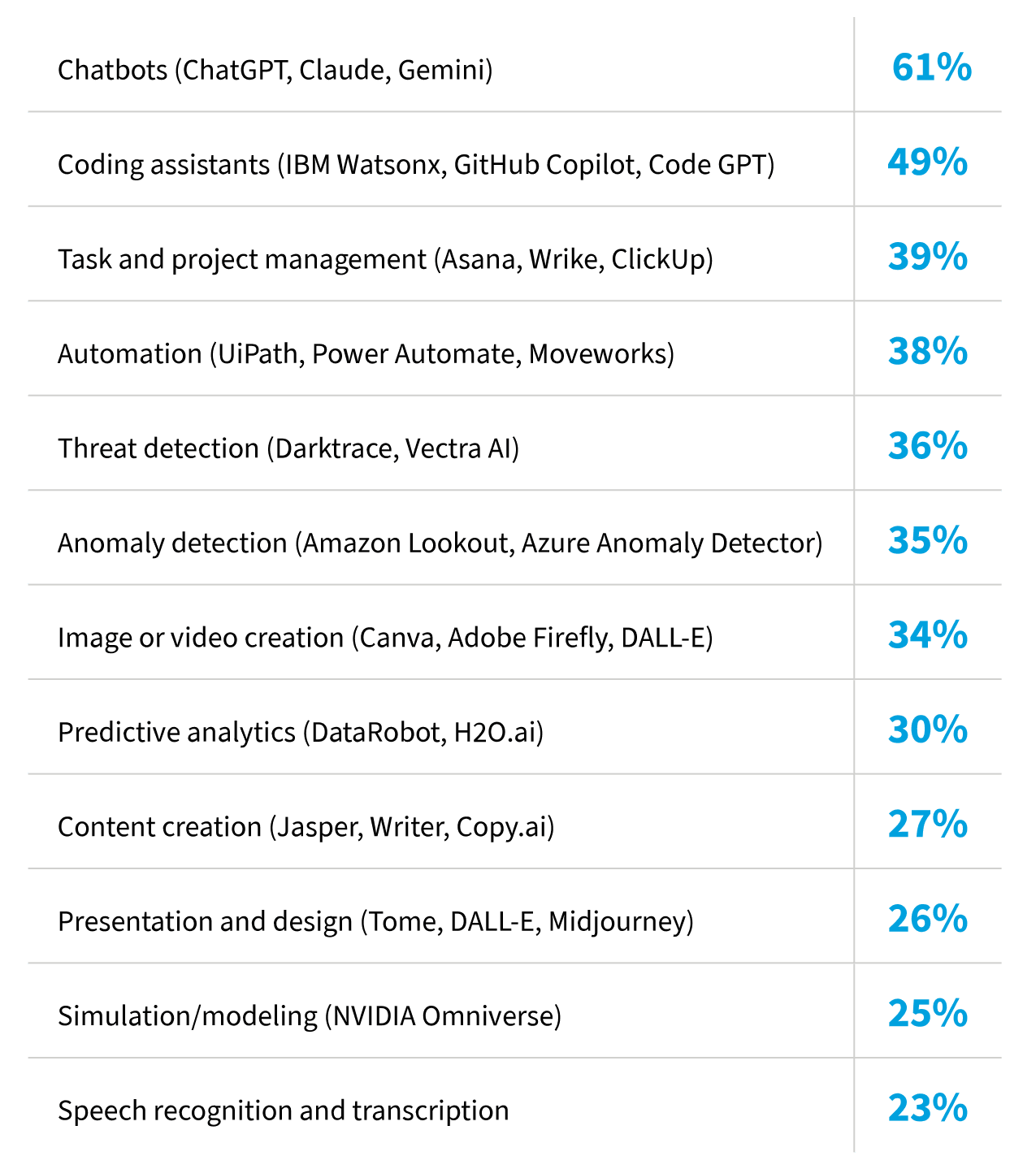

Adoption is accelerating even faster than the spend curves suggest. Across Flexera’s cloud and IT priorities data sets, 81% of organizations are using or experimenting with AI and machine learning (ML); 61% say they’re actively using chatbots like ChatGPT, Claude and Gemini; and according to the Flexera 2026 IT Priorities Report, nearly all IT decision-makers (94%) are looking for new ways to integrate AI into their technology stack.

Usage of artificial intelligence (non-GenAI)/machine learning

What are your organization’s IT priorities for the year ahead?

But the impact isn’t limited to model usage. Heavyweight analytics platforms such as Databricks and Snowflake, which are gaining executive attention, are now central to many enterprise AI roadmaps, and they add significant cost pressure as teams scale data pipelines, model training and inference workloads. Their growing footprint underscores how quickly AI initiatives reshape both architecture and spend.

“If you asked me two years ago about my Databricks spend, I would’ve said it was just one of dozens or hundreds of software suppliers we have. Now, I can tell you that our number two supplier is Databricks and I know exactly what I spend there because we’ve spent hours working with our product management and engineering teams trying to predict what our consumption is going to look like for the next three years.”

—Jim Ryan, Flexera CEO


GenAI adoption trends: What the data says

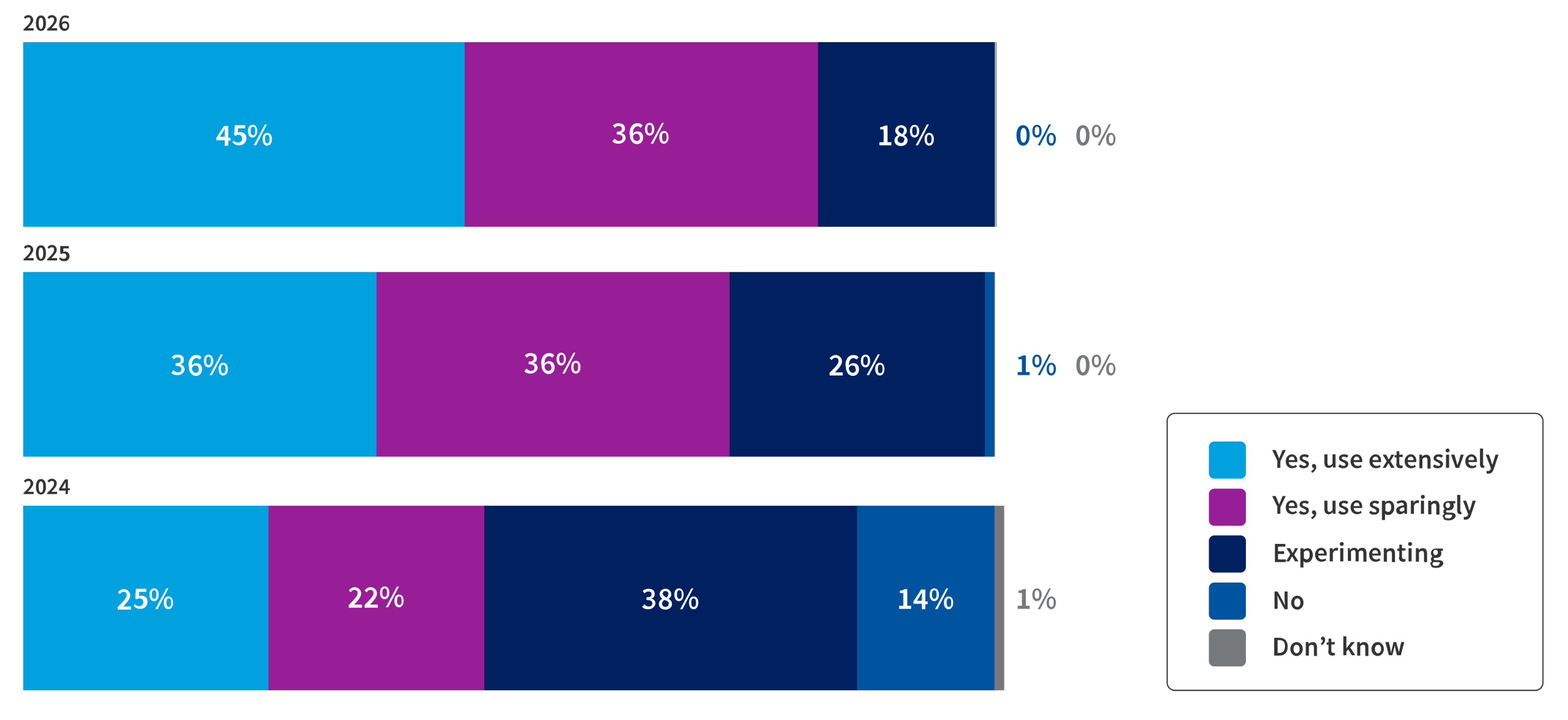

The Flexera 2026 State of the Cloud Report reinforces the same acceleration curve, showing significant increases in GenAI usage. GenAI has moved from curiosity to critical capability, surfacing in development workflows, security and operations automation, customer-facing experiences and features embedded within SaaS platforms.

Use of generative AI (GenAI) public cloud services

The AI wave cannot be slowed—but it can be governed

The new economics of AI: Spend, waste and the discipline gap

Key takeaways

- AI spending is rising rapidly, along with waste. Budgets are growing, but much of the spending is hard to justify or track due to unpredictable usage and uncontrolled tool adoption

- AI costs are highly unpredictable. Standard cloud cost controls don’t fit AI’s volatile workloads and shifting pricing, making financial management difficult

- Visibility is crucial. Without clear oversight of AI tools and spending, organizations risk uncontrolled costs and policy gaps. Unified visibility enables better governance and value

AI spend is surging, but so is waste

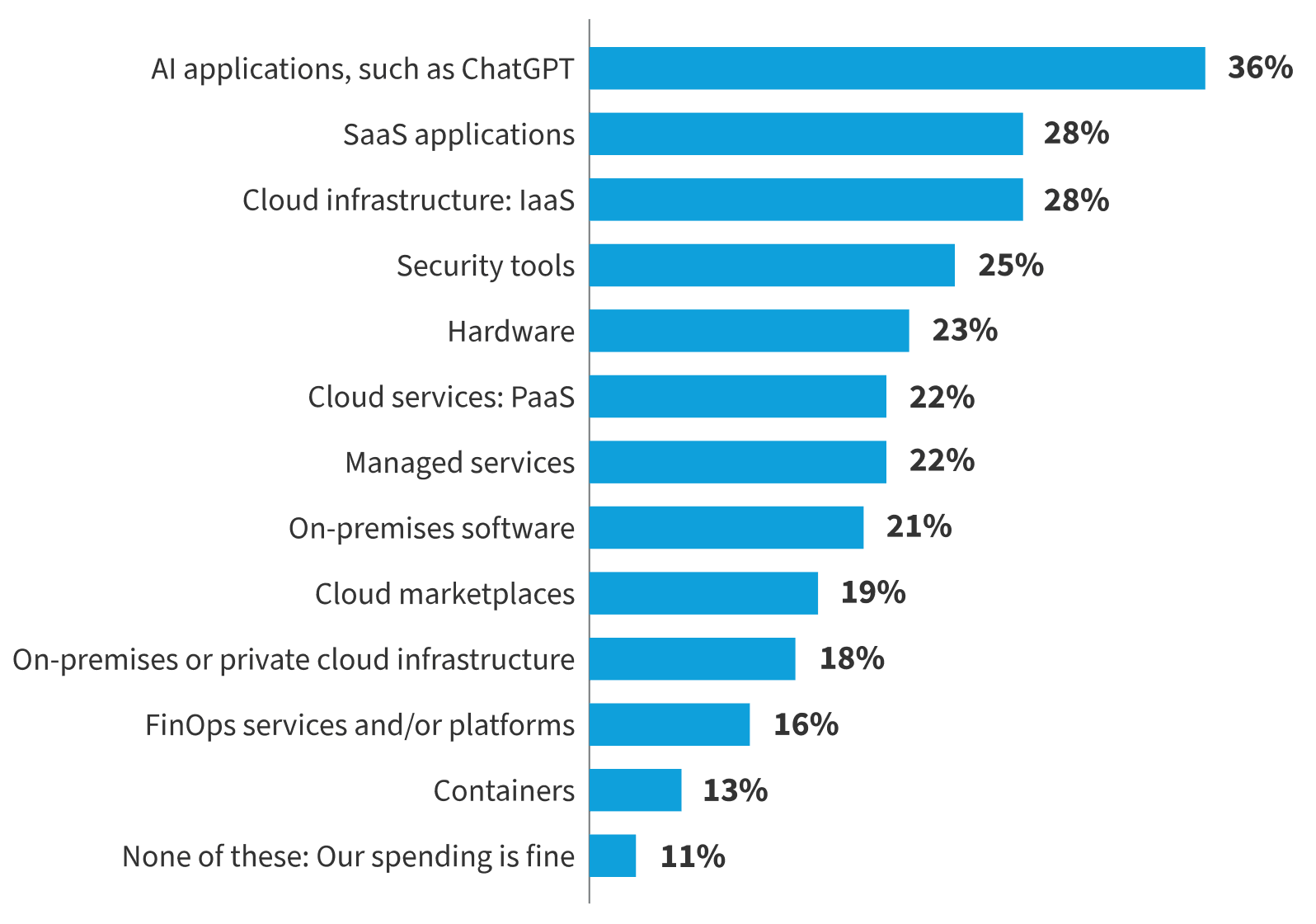

AI adoption is rising at unprecedented speed—and spend is climbing just as fast. In many organizations, waste is keeping pace. Eighty percent of leaders report that they have increased their AI investments. That being said, 36% say they spent too much on AI applications, and 14% report wasted AI spend.

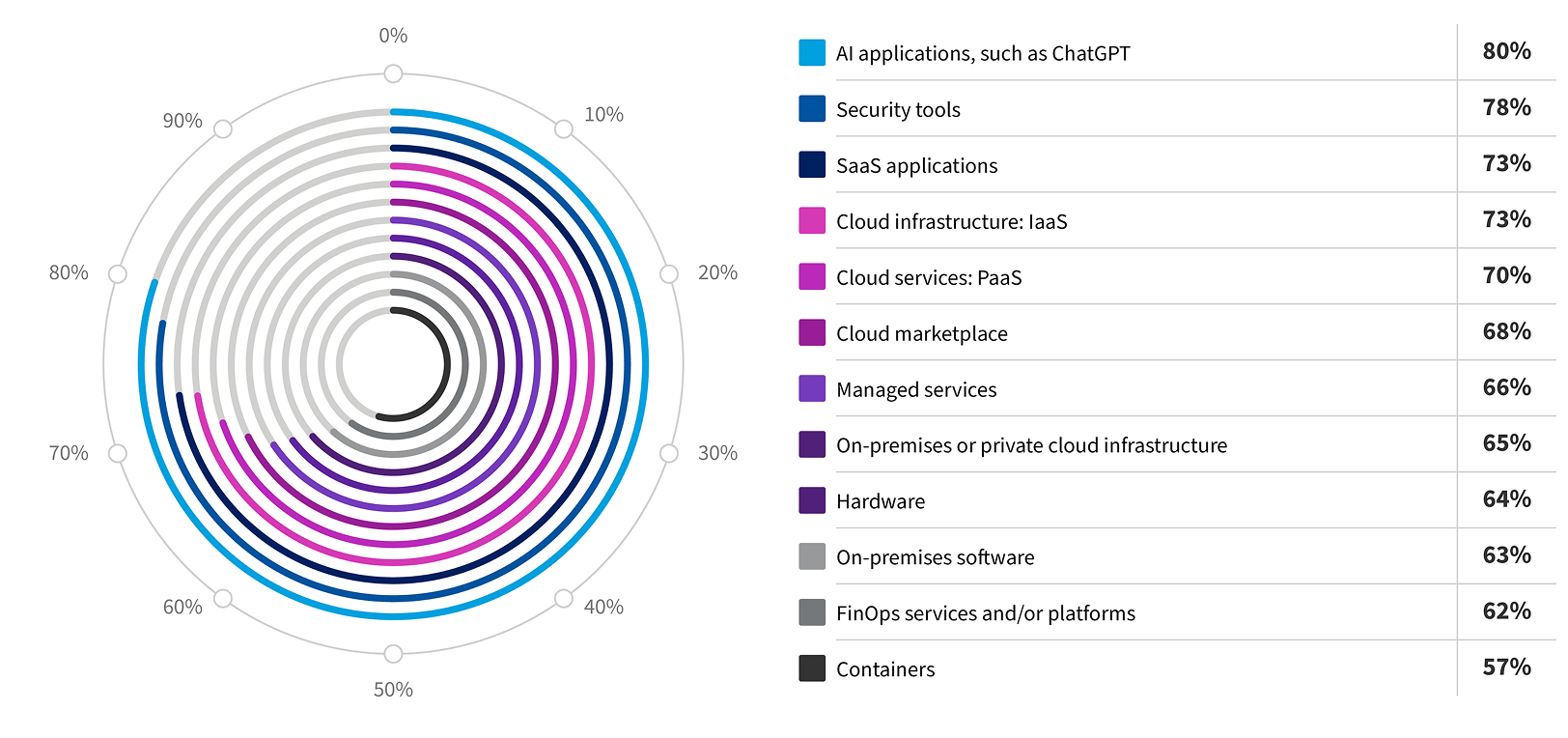

Respondents who indicated their investment in the following technologies increased over the past twelve months

Where, if at all, are you currently overspending on technology?

This creates a challenging dynamic: AI is the top priority, budgets are expanding and yet a meaningful share of spend can’t be justified or even fully tracked. Much of the overspend stems from rapidly shifting licensing and consumption models, unpredictable usage patterns, uneven governance and proliferation of AI-powered SaaS tools acquired outside of IT’s view. In short, AI is accelerating faster than cost controls are.

AI cost unpredictability becomes one of the top cloud challenges

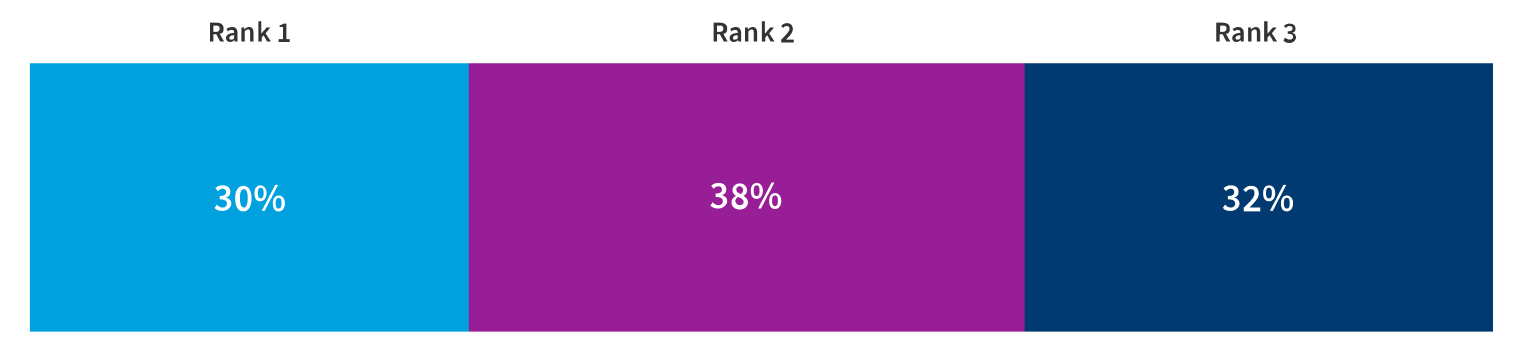

Part of what drives these rising costs is that AI is changing cloud spend in ways that are increasingly hard to predict. Thirty percent of respondents listed cost management and unpredictability of AI cloud workloads as their number one challenge, 38% said it was their second-biggest challenge and 32% ranked it as their third-biggest.

Cost management and unpredictability of AI cloud workloads

Top three challenges facing organizations when scaling AI workloads in the cloud

These rankings signal a structural issue: AI workloads don’t behave like traditional cloud workloads. Their consumption curves spike and shift in ways that break standard forecasting models. In practice, that means organizations need better cost attribution, policy-based guardrails for consumption, integrated insights and proactive procurement evaluation for AI-heavy platforms.

“When unpredictability ranks as a universal top three challenge, it’s a clear warning sign. Without systemic visibility and governance, organizations are left reacting to AI costs instead of controlling them.”

—Conal Gallagher, CIO and CISO, Flexera

AI cost behavior diverges from traditional cloud patterns in four important ways

- AI workloads burst instead of scaling linearly. A single inference workflow can trigger a massive compute draw, especially when models are retrained or fine-tuned.

- SaaS AI pricing is volatile. Vendors are monetizing AI features through add-ons, usage tiers, bundled licenses and opaque metering.

- AI projects often start in the shadows. When line-of-business teams experiment without visibility, spend becomes fragmented and untraceable.

- AI tools compound quickly. Every new AI-powered SaaS tool added to the stack widens the financial attack surface.

IT visibility gaps emerge as a defining risk to cost

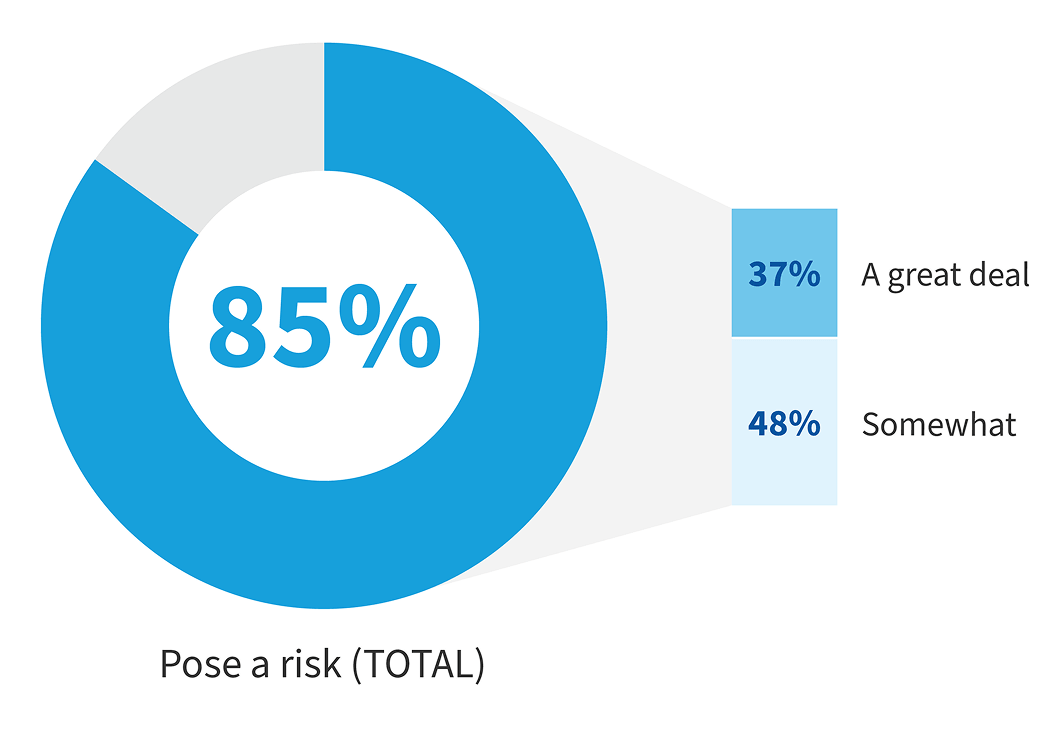

Visibility is a critical control point for spending. According to the Flexera 2026 IT Priorities Report, 85% of organizations say gaps in IT visibility pose a major risk, and although 92% say their employees are using AI technology well, nearly half (45%) say they don’t always know how or when their employees are using AI tools.

That risk compounds when organizations can’t see who’s using AI, which SaaS tools include embedded AI, what models and providers employees interact with, where data flows and which contracts include AI-related usage or terms. For the cloud, visibility was the foundation of FinOps maturity; for AI, it’s the foundation for everything: governance, cost management, compliance, risk reduction and value realization.

How much does having gaps in IT visibility pose a risk to your organization?

As adoption accelerates and spend rises, organizations operating without unified visibility face uncontrolled experimentation, rising spend without outcomes, inconsistent policy enforcement, increased compliance exposure and cascading SaaS risk. Visibility is the prerequisite for AI control.

“AI visibility is no longer just an operational concern—it’s a board‑level risk. As AI adoption accelerates, the absence of clear insight into usage, data flows and contracts creates exposure that compounds faster than most governance models can respond.”

—Conal Gallagher, CIO and CISO, Flexera

Why traditional cost management frameworks fall short

AI’s financial turbulence is revealing the limits of legacy cost management. Traditional FinOps practices—such as tagging, rightsizing or reserved instances—were built for cloud services with predictable consumption curves. AI breaks those assumptions.

All of this compounds into overspend. AI workloads spike unpredictably, AI SaaS pricing shifts without transparency and experimentation often runs outside FinOps oversight. The result is a financial model that’s far more volatile than cloud ever was—and a clear signal that AI requires new disciplines to keep cost, value and risk in balance.

The path forward: Financial clarity built on unified visibility

The organizations that get ahead won’t rely on reactive cost control. They’ll shift to proactive financial instrumentation—whether they’re using AI tools across the business or building AI capabilities for employees or customers.

What organizations need when using AI tools

When teams adopt GenAI applications, AI‑powered SaaS, and AI features embedded in existing platforms, the priority is controlling consumption and license-driven cost risk. That requires:

- Comprehensive discovery of every AI tool in use across cloud accounts, SaaS portfolios and shadow applications

- Accurate spend attribution that separates AI SaaS fees, embedded AI surcharges and general subscription spend

- Dynamic forecasting that accounts for usage surges, data‑movement volatility and shifting vendor pricing

- Governance controls to manage data exposure, security policies and approved AI usage
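The spend-attribution requirement can be sketched in a few lines of Python. This is a minimal illustration, assuming invoice line items have already been tagged with a hypothetical `category` field; the category names and vendors are invented for the example, and real billing exports would need their own mapping rules. Untagged items are reported as unclassified rather than silently folded into general subscription spend.

```python
from collections import defaultdict

# Hypothetical categories; map your own billing data into them.
CATEGORIES = ("ai_saas", "embedded_ai_surcharge", "subscription")


def attribute_spend(line_items):
    """Sum spend per category, reporting untagged or unknown items as 'unclassified'."""
    totals = defaultdict(float)
    for item in line_items:
        category = item.get("category")
        if category not in CATEGORIES:
            category = "unclassified"  # surface attribution gaps instead of hiding them
        totals[category] += item["amount"]
    return dict(totals)


# Illustrative line items only.
invoices = [
    {"vendor": "vendor-a", "category": "ai_saas", "amount": 900.0},
    {"vendor": "vendor-b", "category": "subscription", "amount": 1500.0},
    {"vendor": "vendor-b", "category": "embedded_ai_surcharge", "amount": 300.0},
    {"vendor": "vendor-c", "amount": 250.0},  # no category tag
]
print(attribute_spend(invoices))
# {'ai_saas': 900.0, 'subscription': 1500.0, 'embedded_ai_surcharge': 300.0, 'unclassified': 250.0}
```

Keeping an explicit "unclassified" bucket makes the size of the visibility gap itself a number that can be tracked and driven down.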

What organizations need when building AI capabilities

When teams train, deploy or customize models, the financial surface area of AI expands. Costs shift from licensing to graphics processing unit (GPU) utilization, inference workloads and data pipelines. That requires:

- Attribution models that isolate inference, retraining and data‑processing costs from routine cloud compute

- Guardrails that respond to financial triggers such as anomalous GPU burn or runaway batch jobs

- Unified visibility across cloud, SaaS and custom AI workloads so governance, procurement and FinOps can act on the same data
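A guardrail that responds to anomalous GPU burn could start as simply as the Python sketch below, which flags any day whose spend exceeds the trailing mean by more than a set number of standard deviations (a basic z-score rule). This is one possible technique, not a prescribed method; the function name, the seven-day window, the threshold of 3 and the sample figures are all assumptions for illustration.

```python
import statistics


def flag_anomalous_spend(daily_spend, window=7, threshold=3.0):
    """Return indices of days whose spend is more than `threshold` standard
    deviations above the mean of the preceding `window` days.

    `window` and `threshold` are illustrative defaults, not recommendations.
    """
    flagged = []
    for i in range(window, len(daily_spend)):
        history = daily_spend[i - window:i]
        mean = statistics.mean(history)
        stdev = statistics.pstdev(history)
        if stdev == 0:
            # Perfectly flat history: treat any increase as anomalous.
            if daily_spend[i] > mean:
                flagged.append(i)
            continue
        if (daily_spend[i] - mean) / stdev > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily GPU spend with one runaway job on day 8.
gpu_spend = [100.0, 102.0, 98.0, 101.0, 99.0, 100.0, 103.0, 100.0, 420.0, 101.0]
print(flag_anomalous_spend(gpu_spend))  # [8]
```

A production guardrail would likely account for seasonality and planned retraining runs and route each flag to an owner or an automated action. Note also that a spike stays in the trailing window for the following days, raising the baseline until it rolls out.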

Whether you’re consuming AI or creating it, the path forward is the same: visibility first, instrumentation second, governance tied to financial behavior throughout. You can’t optimize or control what you can’t see—and AI raises the stakes on both.

Governance, risk and the visibility mandate

Key takeaways

- Rapid AI adoption is outpacing organizations’ abilities to monitor, govern and secure usage, resulting in oversight gaps and systemic risks

- Shadow AI can easily become widespread, making it increasingly difficult for organizations to maintain visibility and control, which heightens security and compliance challenges

- Effective governance, grounded in unified visibility, embedded policies and cross-functional collaboration, is essential to mitigate risks and ensure that AI delivers value without exposing the organization to liability

Shadow AI is no longer a fringe problem

Organizations are adopting AI faster than they can secure it, monitor it or even detect it—creating a widening gap between AI enthusiasm and AI oversight. The risks accumulating in that gap are no longer isolated; they’re becoming systemic. Organizations need the controls, visibility mechanisms and guardrails to protect themselves from security breaches, compliance failures, data exposure, intellectual property drift and uncontrolled AI usage.

As mentioned earlier, one sobering signal of how deeply shadow AI can penetrate the enterprise comes from the Flexera 2026 IT Priorities Report: 45% of IT decision-makers said they don’t always know when employees are using AI.

Shadow AI emerges when employees test public GenAI tools, when SaaS platforms enable AI features by default, when browser‑based helpers slip into workflows or when unapproved plugins and assistants feel harmless. And while shadow IT was once limited to unsanctioned SaaS subscriptions, shadow AI is far harder to detect because prompts look like normal web traffic, AI features are embedded invisibly across SaaS products, vendors turn on capabilities without advance notice and many employees believe “just testing” carries no risk.

You can't defend against what you can’t see—and shadow AI is expanding faster than visibility controls can keep up. The result is a growing exposure surface with limited monitoring, limited governance and no guarantees that data, models or usage stay within approved boundaries.

Cloud-based AI security and compliance risks rise to the top

Fifty-three percent of respondents in Flexera’s 2026 State of the Cloud survey say security and compliance risks associated with cloud-based AI are their top challenge. Another 28% rank it as their second-biggest challenge, and 20% rank it as their third. This makes security and compliance the single most consistently cited AI challenge, outpacing cost, talent and operational hurdles.

Security and compliance risks associated with cloud-based AI

Top three challenges facing organizations when scaling AI workloads in the cloud

AI systems introduce entirely new exposure points. They process unprecedented volumes of sensitive data, generate content that may contain regulated or proprietary information, create data flows that bypass established controls and operate with opaque model behavior that’s difficult to assess. Security teams are now expected to safeguard:

- Data they can’t fully see

- Tools they didn’t procure

- Models they can’t inspect

- Vendors they can’t easily audit

- Usage patterns they can’t reliably trace

This isn’t just a technical challenge; it’s a governance gap. And as AI adoption accelerates, that gap is widening faster than traditional security models can respond.

“Cloud‑based AI introduces new security and compliance risks because it operates beyond many existing guardrails. Without unified visibility and governance, those risks scale faster than most organizations realize.”

—Conal Gallagher, CIO and CISO, Flexera

External research reinforces the urgency

A 2025 Gartner survey found that 69% of cybersecurity leaders suspect or have evidence of employees using unauthorized public GenAI tools, leading to risks like IP loss, data exposure and security gaps; Gartner further predicts that by 2027, 40% of enterprises will face a security or compliance incident tied to shadow AI. Additional Gartner research shows AI-related information governance risks and shadow AI are rapidly climbing senior risk leaders’ priorities, echoing Flexera’s findings that AI adoption is outpacing organizations’ ability to govern it.

The speed of AI adoption has outpaced organizations’ ability to monitor, control and govern it—and the visibility gap is widening

Governance is the hinge: Visibility, then control—then trust

The highest‑performing organizations aren’t avoiding AI risk; they’re controlling it by treating governance as a core operating system, not an afterthought. Their frameworks are lighter, more operational and built for real‑time oversight. To create governance with authority, not governance in theory, organizations need effective visibility, policy embedded in the workflow, secure data governance, AI and compliance alignment and cross-functional governance. They also need AI model security, an aspect of governance that is growing in importance: keeping models safe from prompt injection, model poisoning, adversarial attacks and other threats.

Visibility as the foundation

Effective governance starts with unified visibility across:

- Discovery of AI tools and AI-powered features

- Model and dataset inventories

- User-level access and telemetry

- Automated detection of shadow AI

- Vendor risk profiles tied to procurement

Responsible AI and compliance alignment

Governance must align AI deployment with:

- Ethical frameworks

- Regulatory requirements

- Contractual safeguards

- Industry-specific standards such as HIPAA, GDPR and PCI

Policy embedded in the workflow

Governance must move out of binders and into the flow of work through:

- Policy-backed procurement gates

- Usage rules enforced in the application

- Controlled sandboxes for experimentation

- Role-based model interaction

- Automated blocks on high-risk tools

A cross-functional governance body

AI can’t be governed by IT alone. High-performing organizations bring together:

- IT and security

- Legal and compliance

- Data governance

- Procurement and finance

- Engineering

- FinOps and ITAM

- Business unit leaders

Data governance that’s secure by design

Because AI touches more data than any prior technology wave, organizations need:

- Data classification and lineage

- Model access rules

- Zero-trust identity extended to AI systems

- Audit trails for prompts, outputs and derived data

- Encryption and compliance tracking

The cost of getting governance wrong

Without governance and visibility, organizations face escalating exposure: data leakage, regulatory penalties, intellectual property loss, untracked spend surges, model misuse, inconsistent outputs and uncontrolled contractual liabilities. Each of these issues is damaging on its own, but together they form a material organizational risk that compounds as AI adoption accelerates.

Realizing AI value: Scaling responsibly, measuring impact and turning clarity into ROI

Key takeaways

- To realize lasting AI value, organizations must scale responsibly by establishing strong governance, continuous measurement and clear alignment with business outcomes

- Measurement, visibility and financial controls are foundational—without them, even widespread AI adoption fails to deliver meaningful or measurable ROI

- Successful teams prioritize clarity, embed governance early and close visibility gaps to ensure that every AI deployment is accountable, traceable and drives tangible business results

The challenge now is moving from AI’s enormous promise to measurable results. The key isn’t just investing or experimenting—it’s scaling responsibly, tracking impact rigorously and establishing clear visibility from the outset. Without these foundations, even widespread AI deployment can fall short of driving meaningful business outcomes.

The core problem: AI adoption is high, but value realization is low

Across industries, leaders are rapidly integrating AI, but far fewer are actually capturing value. The issue isn’t a lack of usage—it’s a lack of guardrails around measurement, visibility and governance.

“Cloud-based AI introduces new risks because it operates beyond many existing guardrails, and organizations don’t have the measurement in place they need. Without unified measurement, visibility and governance, those risks scale faster than most organizations realize.”

—Conal Gallagher, CIO and CISO, Flexera

As the previous sections show, Flexera’s research mirrors this pattern: AI investment is rising, experimentation is widespread, governance and visibility gaps persist and shadow AI continues to accelerate. Organizations are integrating AI tools, but they still struggle to connect usage to business KPIs, isolate which deployments drive ROI or measure whether initiatives deliver meaningful outcomes. This isn’t a technology failure. It’s a measurement failure.

Scaling AI responsibly: The bridge between ambition and outcomes

Responsible AI scaling means organizations don’t jump from pilot to full rollout without first establishing visibility, governance, cost discipline, measurement frameworks and operational guardrails. These foundations ensure AI is deployed not just ethically and securely, but also effectively.

What responsible scaling looks like

- Start with a clear value hypothesis. Every AI initiative should anchor to a specific, measurable outcome, such as reduced cycle time, improved accuracy, faster detection, lower handle time or cost displacement.

- Use KPI‑linked scale gates. Pilots should advance only when they meet thresholds for data quality, model performance, compliance readiness, cost‑per‑unit benchmarks or productivity gains. If a pilot can’t clear the gates, it shouldn’t scale.

- Measure value continuously. AI value decays when it’s not monitored. Outputs drift, usage drops, shadow adoption fragments workflows and cost spikes can mask ROI. Measurement isn’t a one‑time check; it’s an operating discipline.

- Align FinOps and ITAM. Generally, FinOps governs cloud economics while ITAM governs software and SaaS. But AI spans both worlds. Responsible scaling connects them to provide a unified financial and operational view.

- Expand with risk awareness. As governance matures, organizations can scale confidently, knowing that data lineage is enforced, access is controlled, compliance is monitored, model usage is auditable and shadow AI is declining.
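KPI‑linked scale gates can be expressed as explicit, testable thresholds. The Python sketch below is a hypothetical illustration: the metric names, thresholds and pilot results are invented, and each organization would set its own. A metric missing from the pilot results counts as a failed gate, so a pilot cannot scale on incomplete evidence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gate:
    """One scale gate: a metric, a threshold and the direction that counts as passing."""

    metric: str
    threshold: float
    higher_is_better: bool = True

    def passes(self, value: float) -> bool:
        return value >= self.threshold if self.higher_is_better else value <= self.threshold


def evaluate_pilot(results, gates):
    """Return (ready_to_scale, failed_metrics). A missing metric counts as a failure."""
    failures = []
    for gate in gates:
        value = results.get(gate.metric)
        if value is None or not gate.passes(value):
            failures.append(gate.metric)
    return (not failures, failures)


# Hypothetical gates and pilot results, for illustration only.
gates = [
    Gate("data_quality_score", 0.95),
    Gate("accuracy_lift_pct", 5.0),
    Gate("cost_per_unit", 3.50, higher_is_better=False),
    Gate("compliance_review_passed", 1.0),
]
pilot_results = {
    "data_quality_score": 0.97,
    "accuracy_lift_pct": 3.2,
    "cost_per_unit": 3.10,
    "compliance_review_passed": 1.0,
}
ready, failed = evaluate_pilot(pilot_results, gates)
print(ready, failed)  # False ['accuracy_lift_pct']
```

Returning the list of failed metrics, not just a yes or no, tells the pilot team exactly which threshold to work on before the next review.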

The ROI formula for AI: Clarity → Control → Measurement → Value

Across Flexera’s research, organizations that successfully capture AI value share clear patterns:

- They establish clarity first. They start with full visibility across AI usage, spend, SaaS features, cloud workloads and data pathways. This is the foundation of FinOps for AI: you can’t optimize or govern what you can’t see.

- They embed governance early. Usage rules, procurement gates and model policies are put in place before AI reaches scale. These guardrails keep experimentation productive and prevent sprawl.

- They define value using shared metrics. Business, IT and finance align on common measures tied to productivity, cost avoidance, accuracy, throughput, service quality and risk reduction. This ensures AI outcomes map directly to business priorities.

- They monitor value after deployment. Continuous measurement prevents the forms of AI drift that erode ROI: declining usage, output inconsistencies, rising costs and shadow adoption. FinOps for AI treats value monitoring as an ongoing operating discipline, not a one‑time validation.

- They eliminate blind spots. Shadow AI can’t produce ROI because it can’t be measured, governed or optimized. High‑performing teams close visibility gaps across cloud, SaaS and AI‑powered tools to ensure every deployment is traceable and accountable.

AI only creates value when it can be seen, controlled and measured. FinOps for AI connects financial insight, operational visibility and governance into a single system of record.


Vertical snapshots: How industries turn AI into real ROI

Key takeaways

- Each industry faces unique AI challenges, but overcoming visibility, governance and measurement gaps is key to unlocking value

- Organizations achieve lasting AI success by treating it as a disciplined, measured and cross-functional operational practice

- Responsible innovation means scaling AI only after proven pilots, ensuring costs and risks are well managed

Financial services

Financial services organizations face tight regulatory pressure and complex data environments. Their biggest blockers center on data lineage, compliance controls and model auditability. Despite these hurdles, value opportunities are strong: fraud detection, risk scoring and automation of manual processes. Governance maturity is typically high, but so is exposure to shadow AI because experimentation often happens outside approved workflows.

What blocks ROI:

- Strict regulatory constraints

- High sensitivity of customer and transactional data

- Complex data lineage and audit requirements

How to realize ROI:

- Enforce model lineage tracking and strong data governance from day one

- Use KPI-based scale gates tied to accuracy lift and false positive reduction

- Integrate FinOps and ITAM to manage AI-enhanced SaaS license creep

- Eliminate shadow AI to avoid compliance violations

Healthcare and life sciences

Healthcare and life sciences must navigate protected health information (PHI) exposure, strict regulatory constraints and growing demands for explainability. These barriers shape a value profile focused on documentation automation, diagnostic support and clinical workflow optimization. Governance-led scaling is the norm, with compliance acting as both a constraint and an enabler of safe adoption.

What blocks ROI:

- PHI sensitivity and HIPAA-class data-handling rules

- Explainability requirements for clinicians

- Vendor risk and lack of transparency

How to realize ROI:

- Deploy AI behind strong data governance and privacy guardrails

- Use outcome-based KPIs (e.g., documentation time saved, case throughput)

- Require vendor evidence of security posture and model transparency

- Monitor usage continuously to prevent drift or unsanctioned tools

Manufacturing

Manufacturers struggle most with fragmented data and gaps between operational and IT systems. Their AI value targets revolve around predictive maintenance, supply chain optimization and robotics augmentation. While the value story is operationally compelling, risk conversations are dominated by uptime, safety and process continuity.

What blocks ROI:

- Fragmented OT/IT data

- Inconsistent sensor or plant-level telemetry

- High cost of AI/ML infrastructure for industrial workloads

How to realize ROI:

- Consolidate plant and cloud telemetry into unified visibility

- Tie AI rollout to measurable reductions in downtime or scrap

- Gate scaling decisions on improved cycle times or mean time between failures (MTBF) metrics

- Control shadow AI to prevent unauthorized automation changes

Retail and consumer

Retailers and consumer brands face challenges managing customer data governance and vendor sprawl across fast-changing tech stacks. Their AI priorities focus on personalization, demand forecasting and service automation. High experimentation drives fast innovation—but also fuels high shadow AI exposure.

What blocks ROI:

- Customer data privacy requirements

- Proliferation of AI-powered SaaS tools across marketing and ecommerce

- High experimentation culture leading to shadow AI sprawl

How to realize ROI:

- Inventory all AI-enabled SaaS to avoid duplicated capabilities

- Use KPI gates tied to conversion, retention and basket size impact

- Implement procurement guardrails for any AI-powered MarTech stack additions

- Centralize model and vendor governance to reduce cost overlap

Public sector

Public-sector organizations contend with procurement constraints, compliance burdens and heightened security expectations. Their value targets include constituent service automation, risk reduction and operational efficiency. Adoption tends to be conservative, but oversight pressure keeps governance tight.

What blocks ROI:

- High compliance burden and slow procurement processes

- Legacy infrastructure

- Skills shortages for AI and data science

How to realize ROI:

- Deploy AI in controlled pilots aligned to measurable service improvements

- Prioritize low-risk, high-volume government workflows

- Apply strict access controls and audit trails for every AI interaction

- Build small cross-functional AI governance groups to scale safely

AI value maturity: What “good” looks like across industries

Organizations that consistently extract value from AI share a common pattern: They treat AI as an operational discipline, not a one‑off project. AI value isn’t left to chance—it’s deliberately engineered. It all starts with total visibility: those who track every AI model, SaaS tool and cloud workload ensure nothing escapes their view. This transparency lays the groundwork for thoughtful financial management and ongoing optimization.

But visibility isn’t enough. Governance shouldn't be siloed in one department. Security, IT, finance, legal and data teams collaborate, turning governance into a dynamic process that guides innovation and keeps everyone accountable. Instead of slowing things down, this approach makes sure every new AI initiative is aligned with broader goals.

Organizations that adopt operational discipline move beyond experimentation and turn AI into an operating advantage

The best organizations also set clear metrics tied to real outcomes from the start, instead of waiting until the end to measure success. Measurement is ongoing, enabling teams to spot opportunities and address issues before they become problems. These teams scale cautiously, only moving forward when pilots deliver proven value and risks are well understood. This disciplined growth ensures that costs stay manageable and benefits are realized, reinforcing smart financial practices. Experimentation is encouraged, but always within transparent boundaries. Teams are constantly refining their approach and making optimization an everyday pursuit rather than a one-off task.

AI value isn’t inevitable. It’s engineered.

Organizations are adopting AI at extraordinary speed, but its benefits aren’t guaranteed. Many still lack the visibility, governance and measurement needed to manage it. The hype cycle may rise and fall, but the operational pressure created by AI is only increasing.

- AI creates opportunity.

- Visibility creates control.

- Governance creates safety.

- Measurement creates value.

And alignment—across IT, security, finance, procurement, data and business leaders—creates resilience.

Leaders everywhere must shift from AI adoption to AI accountability. That means understanding where AI is used, how it behaves, what it costs, the risks it introduces and the outcomes it produces. The organizations that lead won’t be the ones that adopt the most tools—they’ll be the ones that instrument AI with discipline. They’ll maintain unified visibility across cloud, SaaS and embedded AI features. They’ll govern AI with the same rigor they apply to security and compliance. They’ll treat cost unpredictability as solvable. They’ll measure AI outcomes with KPIs tied to productivity, accuracy, cost avoidance and improved experience. And they’ll scale AI intentionally—only when value is proven and risk is controlled.

Organizations that operationalize AI with visibility and control will lead; those that rely on assumptions will fall behind

Methodology

The insights in the Flexera 2026 AI Pulse Report are drawn from Flexera’s flagship research reports, primarily the Flexera 2026 IT Priorities Report and the Flexera 2026 State of the Cloud Report. These studies represent thousands of data points collected from IT, cloud, finance, security, procurement and IT asset management (ITAM) leaders across global enterprises.

The perspectives in this report are informed by Flexera’s global research and by operational signals observed across cloud optimization and financial management environments. Flexera’s recent acquisitions of Chaos Genius and ProsperOps further expand this view, surfacing earlier and more granular signals of AI-driven volatility and cost behavior.

Reuse

We encourage the reuse of data, charts and text published in this report under the terms of the Creative Commons Attribution 4.0 International License. You’re free to share and make commercial use of this work as long as you provide attribution to the Flexera 2026 AI Pulse Report as stipulated in the terms of the license.

Want to learn more?

Talk to an expert about how to get the most ROI out of your AI investments.

FAQ

What is the Flexera 2026 AI Pulse Report?

The Flexera 2026 AI Pulse Report is Flexera’s inaugural report on AI adoption, the risks and hurdles organizations face, and the opportunities for improvement. It draws on data from Flexera’s other flagship reports, the State of the Cloud Report and the IT Priorities Report, combining that data to provide meaningful insights for the industry.

What does the Flexera 2026 AI Pulse Report reveal about AI adoption in enterprises?

The Flexera 2026 AI Pulse Report shows that AI adoption has moved beyond experimentation and into mainstream enterprise use. Nearly all organizations are now using or actively integrating AI and machine learning into their technology stacks. AI is a top IT priority for 2026, driven by generative AI, embedded AI in SaaS platforms and growing use across cloud, security, analytics and operations. However, adoption is accelerating faster than organizations’ ability to govern, measure and control AI effectively.

Why are organizations struggling to control AI costs despite increasing investment?

Organizations struggle to control AI costs because AI workloads behave very differently from traditional cloud and SaaS workloads. AI spending is highly unpredictable due to usage‑based pricing, bursty compute demand, GPU‑intensive workloads and rapidly evolving vendor pricing models. The report highlights that many organizations overspend on AI applications or waste AI spend because they lack unified visibility, accurate cost attribution and governance frameworks designed specifically for AI.

What is shadow AI and why is it a growing risk for enterprises?

Shadow AI refers to AI tools, models and features used without formal approval, visibility or governance. This includes employees using public generative AI tools, SaaS platforms enabling AI features by default, or teams experimenting outside approved workflows. The Flexera 2026 AI Pulse Report finds that many organizations do not always know how or when employees are using AI, creating significant risks related to data exposure, compliance violations, unmanaged costs and intellectual property loss.

How can organizations measure and realize ROI from AI investments?

Organizations realize AI ROI by shifting from experimentation to disciplined, measurable operations. The report outlines a clear path: start with full visibility, embed governance early, align AI initiatives to specific business outcomes, and continuously measure performance using shared KPIs. Successful organizations treat AI value as something that is engineered—not assumed—using financial instrumentation, FinOps for AI practices and ongoing optimization to ensure AI investments deliver measurable impact.

Why is visibility critical for AI governance, security and ROI?

Visibility is the foundation of effective AI governance and value realization. Without unified visibility across AI tools, SaaS platforms, cloud workloads, data flows and vendors, organizations cannot control costs, enforce policies, manage risk or measure ROI. The report emphasizes that visibility enables governance, supports compliance and security, reduces shadow AI, and allows organizations to tie AI usage directly to business outcomes. Simply put, organizations cannot optimize or govern AI they cannot see.